Hardware Accelerated Video Playback in Moonlight

David Reveman has just completed a series of optimizations in the Moonlight engine that allows Moonlight to take advantage of your GPU for the data intensive video rendering operations. This is in addition to the standard GPU hardware acceleration that we debuted a few weeks ago.

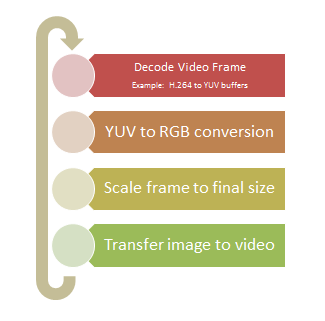

This is what the video rendering loop looks like in Moonlight:

Every one of those steps is an expensive process as it has to crunch to a lot of data. For example, a 720p video which has a frame size of 1280x720, this turns out to be 921,600 pixels. This frame while stored in RGB format at 8 bits per channel takes 2,764,800 bytes of memory. If you are decoding video at 30 frames per second, you need to at least move from the encoded input to the video 82 megabytes per second. Things are worse because the data is transformed on every step in that pipeline. This is what each step does:

The video decoding is the step that decompresses your video frames. This is done one frame at a time, the input might be small, but the output will be the size of the original video.

The decoding process generates images in YUV format. This format is used to store images and videos but and with previous versions of Moonlight, we had to convert this YUV data into an in-memory bitmap encoded in RGB format.

The final step is to transfer this image to the graphics card. This typically involves copying the data from the system memory to the graphics card, and in Unix this goes through the user process to the X server process, which eventually moves the data to the graphics card.

New Hardware Accelerated Framework

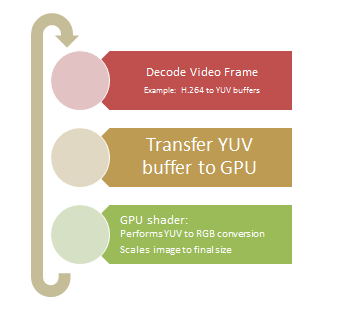

The new hardware acceleration framework now skips plenty of these steps and lets the GPU on the system take over, this is what the new pipeline looks like:

The uncompressed image in YUV format is sent directly to the GPU. Since OpenGL does not really know about YUV images, we use a custom pixel shader that runs on the graphics card to do the conversion for us and we also let the GPU take care of scaling the image.

The resulting buffer is composited with the rest of the scene, using the new rendering framework introduced in Moonlight 4.

Although native video playback solutions have been doing similar things for a while on Linux, we had to integrate this into the larger retained graphics system that is Moonlight. We might be late to the party, but it is now a hardware accelerated and smooth party.

And what does this looks like? It looks like heaven.

We were watching 1080p videos, running at full screen in David's office and it is absolutely perfect.

Getting the Code

The code is available now on Github and will be available in a few hours as a pre-packaged binary from our nightly builds.

Posted on 23 Mar 2011

Blog Search

Archive

- 2024

Apr Jun - 2020

Mar Aug Sep - 2018

Jan Feb Apr May Dec - 2016

Jan Feb Jul Sep - 2014

Jan Apr May Jul Aug Sep Oct Nov Dec - 2012

Feb Mar Apr Aug Sep Oct Nov - 2010

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2008

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2006

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2004

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2002

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Dec

- 2022

Apr - 2019

Mar Apr - 2017

Jan Nov Dec - 2015

Jan Jul Aug Sep Oct Dec - 2013

Feb Mar Apr Jun Aug Oct - 2011

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2009

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2007

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2005

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2003

Jan Feb Mar Apr Jun Jul Aug Sep Oct Nov Dec - 2001

Apr May Jun Jul Aug Sep Oct Nov Dec