Google censors Perrspectives

My friend Jon Perr was running a political commentary site called Perrspectives, and using Google AdWords to advertise it.

Here is the tale of AdWords censoring his site.

Posted on 23 Jun 2004

One of the best Daily Shows today. Jon Stewart was amazing.

Atom support in LameBlog

LameBlog now supports Atom using Atom.NET. Atom.NET supports Mono out of the box (yup, nice Makefile and all), and comes with awesome docs. I am still an Atom newbie, so I do not know if I got everything right; drop me a note if my Atom stinks.

It took 10 minutes, so do not expect an Enterprise-enabled, transaction-based Atom feed just yet.

Posted on 21 Jun 2004

Cory Doctorow's talk on DRM

Cory from the EFF went to Microsoft to talk about DRM. Here is a transcript, which should be mandatory reading.

Joel keeps pumping out the gems.

Most importantly, he follows up with a concrete list of features that the web needs. Now is the time for all good hackers to join Mozilla development.

A key to the proposal from Joel is that some high-level features must be incorporated into HTML in some form, and not be limited to server-side controls implemented with a particular technology (ASP.NET or Struts or PHP): it should be something that can be placed on a static HTML page.

Posted on 19 Jun 2004

Usenix in Boston

My favorite conference is coming to town in a couple of weeks. Hope to see a lot of folks there.

Martin has been doing quite a lot of work on the Mono Debugger, and it is getting nicer every day. And it has docs ;-)

Posted on 18 Jun 2004

More Microsoft Open Source

A parser and scanner for VB.NET has been released by Microsoft. It is written in VB.

Manufacturing the Facts.

Kuwait Times: "U.S truck carrying radioactive material caught in Kuwait".

Tehran Times: "The MNA reported for the first time the coalition forces suspicious transfer of WMD parts from Kuwait to Southern Iraq by trucks."

Update: Someone researched this in depth here, and apparently the trucks were coming *out*, not in.

Posted on 16 Jun 2004

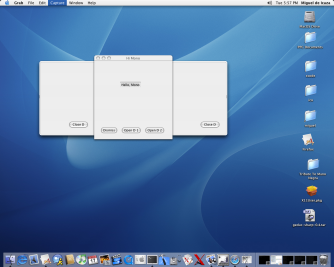

Cocoa Sharp

The Cocoa# developers got their first window showing on MacOS X, and they also got inheritance working. This is a young binding, but here is a screenshot of what it looks like:

Cocoa# sample application.

Posted on 15 Jun 2004

Web Page Thumbnails Sharp!

Ross improved on a script from Matt to do web page thumbnails.

Here is the same written in C#, Gtk# and Gecko#.

```csharp
using System;
using Gtk;
using Gecko;

class X {
    static WebControl wc;
    static string output;      // output file; defaults to "shot.png" below
    static string url;
    static int width = -1;     // -1 means "not specified"
    static int height = -1;

    static void Main (string [] args)
    {
        for (int i = 0; i < args.Length; i++){
            switch (args [i]){
            case "-width":
                try {
                    i++;
                    width = Int32.Parse (args [i]);
                } catch {
                    Console.WriteLine ("-width requires a numeric argument");
                }
                break;
            case "-height":
                try {
                    i++;
                    height = Int32.Parse (args [i]);
                } catch {
                    Console.WriteLine ("-height requires a numeric argument");
                }
                break;
            case "-help":
            case "-h":
                Help ();
                break;
            default:
                // First positional argument is the URL, the second is the output file.
                if (url == null)
                    url = args [i];
                else if (output == null)
                    output = args [i];
                else
                    Help ();
                break;
            }
        }

        if (url == null)
            Help ();
        if (output == null)
            output = "shot.png";

        Application.Init();
        Window w = new Window ("test");
        wc = new WebControl ();
        // Load the parsed URL (args [0] may be an option such as -width).
        wc.LoadUrl (url);
        // Take the screenshot once the page has finished loading.
        wc.NetStop += MakeShot;
        wc.Show ();
        wc.SetSizeRequest (1024, 768);
        w.Add (wc);
        w.ShowAll ();
        Application.Run();
    }

    static void Help ()
    {
        Console.WriteLine ("Usage is: shot [-width N] [-height N] url [shot]");
        Environment.Exit (0);
    }

    static void MakeShot (object sender, EventArgs a)
    {
        Gdk.Window win = wc.GdkWindow;
        int iwidth = wc.Allocation.Width;
        int iheight = wc.Allocation.Height;
        Gdk.Pixbuf p = new Gdk.Pixbuf (Gdk.Colorspace.Rgb, false, 8, iwidth, iheight);
        Gdk.Pixbuf scaled;

        // Copy the rendered page out of the widget's window.
        p.GetFromDrawable (win, win.Colormap, 0, 0, 0, 0, iwidth, iheight);

        // If only one dimension was given, keep the aspect ratio.
        if (width == -1){
            if (height == -1)
                scaled = p;
            else
                scaled = p.ScaleSimple (height * iwidth / iheight, height, Gdk.InterpType.Hyper);
        } else {
            if (height == -1)
                scaled = p.ScaleSimple (width, width * iheight / iwidth, Gdk.InterpType.Hyper);
            else
                scaled = p.ScaleSimple (width, height, Gdk.InterpType.Hyper);
        }

        scaled.Savev (output, "png", null, null);
        Application.Quit ();
    }
}
```

Posted on 14 Jun 2004

On .NET and portability

Jeff posted an article on the portability of Java vs .NET code.

First, let's state the obvious: you can write portable code with C# and .NET (duh). Our C# compiler uses plenty of .NET APIs and works just fine across Linux, Solaris, MacOS and Windows. Scott also pointed to the nGallery 1.6.1 Mono-compliance post, which has some nice portability rules.

Porting an application from the real .NET to Mono is a relatively simple exercise in most cases (path separators and filename casing). This is a situation that I would like to fix in upcoming versions of Mono to simplify porting (with some kind of configuration flag).

It is also a matter of how much your application needs to integrate with the OS. Some applications need this functionality, and others do not.

If my choice is between a system that does not let me integrate with the OS easily and a system that does, I personally would rather use the latter and be responsible for any portability issues myself.

That being said, I personally love to write software that takes advantage of the native platform I am on, especially on the desktop. I want to take advantage of what my desktop has to offer: Gtk# (when using Linux), Cairo, OpenGL, Cocoa# (when using the Mac), GConf, Gnome Printing, and access to Posix.
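As a rough sketch of the two porting issues mentioned above (path separators and filename casing), a small helper like the following can paper over both. The class and method names (`PortablePaths`, `Normalize`, `FindIgnoreCase`) are made up for illustration and are not part of Mono or .NET; only the `System.IO` calls are real. It assumes the paths are hard-coded relative paths, since a backslash is a legal filename character on Unix.

```csharp
using System;
using System.IO;

// Hypothetical helper for illustration; not part of Mono or .NET.
public static class PortablePaths
{
    // Rewrite a hard-coded relative path such as "data\images\logo.png"
    // so that it uses the separator of the platform we are running on.
    public static string Normalize (string path)
    {
        return path.Replace ('\\', Path.DirectorySeparatorChar)
                   .Replace ('/', Path.DirectorySeparatorChar);
    }

    // Look in `dir` for a file whose name matches `name` ignoring case.
    // Returns the path of the first match, or null if there is none.
    public static string FindIgnoreCase (string dir, string name)
    {
        foreach (string candidate in Directory.GetFiles (dir)) {
            if (String.Equals (Path.GetFileName (candidate), name,
                               StringComparison.OrdinalIgnoreCase))
                return candidate;
        }
        return null;
    }

    static void Main ()
    {
        // Build a path below the application directory without
        // assuming either '\' or '/' as the separator.
        string rel = Normalize ("data\\images\\logo.png");
        Console.WriteLine (Path.Combine (AppDomain.CurrentDomain.BaseDirectory, rel));
    }
}
```

New code is better off using `Path.Combine` from the start; a helper like `Normalize` is only a crutch for strings that were written with Windows in mind.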

If my software uses all of the above libraries, will it be easily portable? Most likely not.

Consider what the iFolder team is doing: they are writing the core libraries as a cross platform reusable component. This component is called "Simias" and provides the basic synchronization and replication services. Simias works unchanged on Linux, MacOS, Windows and Solaris. Like every other cross platform software, it must be tested on each platform, and bugs on each platform must be debugged.

This is no different from any other kind of cross platform development.

Then they integrate with each operating system natively at the user interface level: Windows.Forms and COM on Windows, Gtk# on Linux, and in the future Cocoa# on the Mac.

Although Gtk# looks and feels like Windows.Forms on Windows, the problem they were facing is that Gtk# was an extra download for people using iFolder, and they chose to keep things simple for the end user.
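The split described above (a portable core plus a native front end per platform) can be sketched roughly as follows. Every name here (`SyncCore`, `IFrontend`, `GtkFrontend`, `WinFormsFrontend`, `FrontendSelector`) is hypothetical and has nothing to do with the actual iFolder or Simias code; the front ends are stubs that only print, where a real one would talk to Gtk# or Windows.Forms.

```csharp
using System;

// Hypothetical names throughout; none of this is iFolder or Simias code.

// The portable core: no UI and no OS-specific calls.
public class SyncCore
{
    public string Status ()
    {
        return "idle";
    }
}

// What every native front end has to provide.
public interface IFrontend
{
    string Name { get; }
    void Show (SyncCore core);
}

// Stub standing in for a Gtk# user interface.
public class GtkFrontend : IFrontend
{
    public string Name { get { return "Gtk#"; } }

    public void Show (SyncCore core)
    {
        Console.WriteLine ("[{0}] status: {1}", Name, core.Status ());
    }
}

// Stub standing in for a Windows.Forms user interface.
public class WinFormsFrontend : IFrontend
{
    public string Name { get { return "Windows.Forms"; } }

    public void Show (SyncCore core)
    {
        Console.WriteLine ("[{0}] status: {1}", Name, core.Status ());
    }
}

public static class FrontendSelector
{
    // Pick the native front end for the given platform;
    // anything that is not Windows falls back to Gtk# in this sketch.
    public static IFrontend Pick (PlatformID platform)
    {
        if (platform == PlatformID.Win32NT)
            return new WinFormsFrontend ();
        return new GtkFrontend ();
    }
}

class Program
{
    static void Main ()
    {
        IFrontend ui = FrontendSelector.Pick (Environment.OSVersion.Platform);
        ui.Show (new SyncCore ());
    }
}
```

The point of the design is that only the front-end classes need to know which platform they are on; the core is the part that gets tested and debugged on every platform.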

Mono as a new Wine?

Although Jeff's post is fairly naive and not that interesting, I do have a problem with his follow-up: Mono is not just a "Wine-like" technology to emulate an API.

Mono is a complete development stack, with a standard core from ECMA and ISO. Jeff has obviously not written any code with Mono, or he would know that it is quite an enjoyable standalone development platform as opposed to just a runtime.

Mono is also not limited to running .NET code. Thanks to IKVM, a Java VM written entirely in C# and .NET, we can run Java code just as well as .NET code on the same virtual machine, and have them happily talking to each other.

Posted on 11 Jun 2004

Jeffrey in Boston

Nat Interview

Another interview with Nat on the Novell Linux Strategy.

Nat and Dan in Bangalore.

Laura in Kona

Bangalore.

Arturo's Laptop

Arturo is a modern day Indiana Jones, a resourceful humanist. In this picture he is with his laptop and portable 17" monitor, making up for a hard-learned lesson: do not fight gravity with your notebook LCD screen.

Arturo and his C64-like TiBook.

Posted on 08 Jun 2004

On the Google IPO

Summer movies: The Corporation and Fahrenheit 9/11

Trailers are now available on Rotten Tomatoes, along with a list of dates in the US.

Cannot wait to see Michael Moore's new movie, which opens on June 25th!

Venus Transit

Venus Transit on Tuesday.

Posted on 05 Jun 2004
