State of TLS in Mono

This is an update on our efforts to upgrade the TLS stack in Mono.

You can skip to the summary at the end if you do not care about the sausage making details.

Currently, TLS is surfaced in a few places in the .NET APIs:

- By the SslStream class, which is a general purpose class that can be used to turn any bidirectional stream into a TLS-powered stream. This class is what currently powers the web client in Mono.

- By the HttpWebRequest class, which provides .NET's HTTP client. This in turn is the foundation for the modern HttpClient, both the WCF and WebServices stacks, as well as the quick and dirty WebClient API.

HttpClient is particularly interesting, as it allows for different transports to be provided for it. The default implementation in .NET 4.5 and Mono today is an HttpWebRequest-based implementation. But on Windows 10, the implementation is replaced with one that uses WinRT's HTTP client.

Microsoft is encouraging developers to abandon HttpWebRequest and instead adopt HttpClient, as it is both async-friendly and can use the best available transport on a specific platform. More on this in a second.

Mono's Managed TLS

Mono currently only supports TLS 1.0.

This is the stack that powers SslStream and HttpWebRequest.

Last year we started an effort to bring managed implementations of TLS 1.2 and TLS 1.1. Given how serious security has become and how many holes have been found in existing implementations, we built this with an extensive test suite to check for conformance and to avoid common exploits found in implementation mistakes of TLS. This effort is currently under development, and you can see where it currently lives in the mono-tls module.

This will give us complete TLS support for the entire stack, but this work is still going to take a few months to audit.

Platform Specific HttpClients

Most uses of TLS today are via the HTTP protocol, and not over custom TLS streams. This means that it is more important to get an HTTP client that supports a brand new TLS stack than it is to provide the SslStream code.

We want to provide native HttpClient handlers for all of Mono's supported platforms: Android, iOS, Mac, Linux, BSD, Unix and Windows.

On iOS: Today Xamarin.iOS already ships a native handler, the CFNetworkHandler. This one is powered by Apple's CFNetwork stack. In recent years, Apple has improved their networking stack, and we now strongly recommend using Paul Betts' fantastic ModernHttpClient, which uses iOS' brand new NSUrlSession and uses OkHttp on Android.

On Android: in the short term, we recommend adopting ModernHttpClient from Paul Betts (bonus points: the same component works on iOS with no changes). In the long term, we will change the default handler to use the Android Java client.

In both cases, you end up with HTTP 2.0 capable clients for free.

But this still leaves Linux, Windows and other assorted operating systems without a regular transport.

For those platforms, we will be adopting the CoreFX handlers, which on Unix are powered by the libcurl library.

This still leaves HttpWebRequest and everything built on top of it running on top of our TLS stack.

Bringing Microsoft's SslStream and HttpWebRequest to Mono

While this is not really TLS related, we wanted to bring Microsoft's implementations of those two classes to Mono, as they would fix many odd corner cases in the API, and address limitations in our stack that do not exist in Microsoft's implementation.

But the code is tightly coupled to native Windows APIs which makes the adoption of this code difficult.

We have built an adaptation layer that will allow us to bring Microsoft's code and use Mono's Managed TLS implementation.

SslStream backends

Our original effort focused on a pure managed implementation of TLS because we wanted to ensure that the TLS stack would work on all available platforms in the same way. This also means that all of the .NET code that expects to control every knob of your secure connection would work (pinning certificates or validating your own chains, for example).

That said, in many cases developers do not need these capabilities, and in fact, on Xamarin.iOS, we cannot even provide the functionality, as the OS does not give users access to the certificate chains.

So we are going to be developing at least two separate SslStream implementations. For Apple systems, we will be implementing a version on top of Apple's SSL stack, and for other systems we will be developing an implementation on top of Amazon's new SSL library, or the popular OpenSSL variant of the day.

These have the advantage that we would not need to maintain the code, we benefit from third parties doing all the hard security work, and they will be suitable for most uses.

For those rare uses that like to handle connections manually, you will have to wait for Mono's new TLS implementation to land.

In Summary

- Android, Mac and iOS users can get the latest TLS for HTTP workloads using ModernHttpClient.

- Mac/iOS users can use the built-in CFNetworkHandler as well.

- Soon: OpenSSL/AppleSSL based transports to be available in Mono (post Mono 4.2).

- Soon: Advanced .NET SSL use case scenarios will be supported with Mono's new mono-tls stack.

- Soon: HttpWebRequest and SslStream stacks will be replaced in Mono with Microsoft's implementations.

Posted on 27 Aug 2015

Roslyn and Mono

Hello Internet! I wanted to share some updates on Roslyn and Mono.

We have been working towards using Roslyn in two scenarios: as the compiler you get when you use Mono, and as the engine that powers code completion and refactoring in the IDE.

This post is a status update on the work that we have been doing here.

Roslyn on MonoDevelop/XamarinStudio

For the past year, we have been working on replacing the IDE's engine that gives us code completion, refactoring capabilities and formatting capabilities with one powered by Roslyn.

The current engine is powered by a combination of NRefactory and the Mono C# compiler. It is not as powerful, comprehensive or reliable as Roslyn.

Feature-wise, we completed the effort, and we now have a Roslyn-powered branch that uses Roslyn for code completion, refactoring, suggestions and code formatting.

In addition, we ported most of the refactoring capabilities from NRefactory to work on top of Roslyn. These were quite significant. Visual Studio users can try them out by installing the Refactoring Essentials for Visual Studio extension.

While our Roslyn branch is working great and is a pleasure to use, it also consumes more memory and by extension, runs a little slower. This is not Roslyn's fault, but the side effects of leaks and limitations in our code.

Our original plan was to release this for our September release (what we internally call "Cycle 6"), but we decided to pull the feature out from the release to give us time to fix the leaks that affected the Roslyn engine and tune the performance of Roslyn running on Mono.

Our revisited plan is to ship an update to our tooling in Cycle 6 (the regular feature update) but without Roslyn. In parallel, we will ship a Roslyn-enabled preview of MonoDevelop/XamarinStudio. This will give us time to collect your feedback on performance and memory usage regressions, and time to fix the issues before we make Roslyn the default.

Roslyn as a Compiler in Mono

One of the major roadblocks for the adoption of Roslyn in Mono was the requirement to generate debugging information that Mono could consume on Unix (the other one is that our C# batch compiler is still faster than Roslyn).

The initial Roslyn release only had support for generating debug information through a proprietary/native library on Windows, which meant that while Roslyn could be used to compile code on Unix, the result would not contain any debug information - this prevented Roslyn from being useful for most compilation uses.

Recently, Roslyn got support for Portable Program Database (PPDB) files. This is a fully documented, open, compact and efficient format for storing debug information.

Mono's master release now contains support for using PPDB files as its debug information. This means that Roslyn can produce debug information that Mono can consume.

That said, we still need more work in the Mono ecosystem to fully support PPDB files. The Cecil library, which is used extensively to manipulate IL images as well as their associated debug information, needs to learn about PPDB. Our Reflection.Emit implementation will need to get a backend to generate PPDBs (for third party compilers and dynamic code generators), and we need support in IKVM to produce PPDB files (this is used by Mono's C# compiler and other third party compilers).

Additionally, many features in Roslyn surfaced bloat and bugs in Mono's class libraries. We have been fixing those bugs (and in many cases, the bugs have gone away by replacing Mono's implementation with implementations from Microsoft's Reference Source).

Posted on 21 Jul 2015

In Defense of the Selfie Stick

From the sophisticated opinion of the trendsetters to Forbes, the Selfie Stick is the recipient of scorn and ridicule.

One of the popular arguments against the Selfie Stick is that you should build the courage to ask a stranger to take a picture of you or your group.

This poses three problems.

First, the courage/imposition problem. Asking a stranger in the street assumes that you will find such a volunteer.

Further, it assumes that the volunteer will have the patience to wait for the perfect shot ("wait, I want the waves breaking" or "Try to get the sign, just on top of me"). And that the volunteer will have the patience to show you the result and take another picture.

Often, the selfista that has amassed the courage to approach a stranger on the street, out of politeness, will just accept the shot as taken. Good or bad.

Except for a few of you (I am looking at you Patrick), most people feel uncomfortable imposing something out of the blue on a stranger.

And out of shyness, they will not ask a second stranger for a better shot as long as the first one is within earshot.

I know this.

Second, you might fear that the stranger will either take your precious iPhone 6+ and run, or even worse, sweat all over your beautiful phone, leaving you to disinfect it.

Do not pretend like you do not care about this, because I know you do.

Third, and most important, we have the legal aspect.

When you ask someone to take a picture of you, technically, they are the photographer, and they own the copyright of your picture.

This means that they own the rights to the picture and are entitled to copyright protection. The photographer, and not you, gets to decide on the terms to distribute, redistribute, publish or share the picture with others. That includes making copies of it, or most every other thing that you might want to do with those pictures.

You need to explicitly get a license from them, or purchase the rights. Otherwise, ten years from now, you may find yourself facing a copyright lawsuit.

All of a sudden, your backpacking adventure in Europe requires you to pack a stack of legal contracts.

Now your exchange goes from "Can you take a picture of us?" to "Can you take a picture of us, making sure that the church is on the top right corner, and also, I am going to need you to sign this paper".

Using a Selfie Stick may feel awkward, but just like a condom, when properly used, it is the best protection against unwanted surprises.

Posted on 22 Jan 2015

.NET Foundation: Advisory Council

Do you know of someone that would like to participate in the .NET foundation, as part of the .NET Foundation Advisory Council?

Check the discussion where we are discussing the role of the Advisory Council.

Posted on 01 Dec 2014

Microsoft Open Sources .NET and Mono

Today, Scott Guthrie announced that Microsoft is open sourcing .NET. This is a momentous occasion, and one that I have advocated for many years.

.NET is being open sourced under the MIT license. Not only is the code being released under this very permissive license, but Microsoft is providing a patent promise to ensure that .NET will get the adoption it deserves.

The code is being hosted at the .NET Foundation's github repository.

This patent promise addresses the historical concerns that the open source, Unix and free software communities have raised over the years.

.NET Components

There are three components being open sourced: the .NET Framework Libraries, .NET Core Framework Libraries and the RyuJit VM. More details below.

.NET Framework Class Libraries

These are the class libraries that power the .NET framework as it ships on Windows. The ones that Mono has historically implemented in an open source fashion.

The code is available today from http://github.com/Microsoft/referencesource. Mono will be able to use as much as it wants from this project.

We have a project underway that already does this. We are replacing chunks of Mono code that were either incomplete, buggy, or not as fully featured as they should be with Microsoft's code.

We will be checking the code into github.com/mono by the end of the week (I am currently in NY celebrating :-)

Microsoft has stated that they do not currently plan on taking patches back or engaging into a full open source community style development of this code base, as the requirements for backwards compatibility on Windows are very high.

.NET Core

The .NET Core is a redesigned version of .NET that is based on the simplified version of the class libraries as well as a design that allows for .NET to be incorporated into applications.

Those of you familiar with the PCL 2.0 contract assemblies have a good idea of what these assemblies will look like.

This effort is being hosted at https://github.com/dotnet/corefx and is an effort where Microsoft will fully engage with the community to evolve, develop and improve the class libraries.

Today, they released the first few components to github; the plan is for the rest of the redesigned frameworks to be checked in here in the next few months.

Xamarin and the Mono project will be contributing to the efforts to bring .NET to Mac, Unix, Linux and other platforms. We will do this as Microsoft open sources more pieces of .NET Core, including RyuJIT.

Next Steps

Like we did in the past with .NET code that Microsoft open sourced, and like we did with Roslyn, we are going to be integrating this code into Mono and Xamarin's products.

Later this week, expect updated versions of the Mono project roadmap and a list of tasks that need to be completed to integrate the Microsoft .NET Framework code into Mono.

Longer term, we will make the Mono virtual machine support the new .NET Core deployment model as well as the new VM/class library interface.

We are going to be moving the .NET Core discussions over to the .NET Foundation Forums.

With the Mono project, we have spent 14 years working on open source .NET. Having Microsoft release .NET and issue a patent covenant will ensure that we can all cooperate and build a more vibrant, richer, and larger .NET community.

Posted on 12 Nov 2014

Mono for Unreal Engine

Earlier this year, both Epic Games and Crytek made their Unreal Engine and CryEngine available under an affordable subscription model. These are both very sophisticated game engines that power some high end and popular games.

We had previously helped Unity bring Mono as the scripting language used in their engine and we now had a chance to do this over again.

Today I am happy to introduce Mono for Unreal Engine.

This is a project that allows Unreal Engine users to build their game code in C# or F#.

Take a look at this video for a quick overview of what we did:

This is a taste of what you get out of the box:

- Create game projects purely in C#

- Add C# to an existing project that uses C++ or Blueprints.

- Access any API surfaced by Blueprint to C++, and easily surface C# classes to Blueprint.

- Quick iteration: we fully support UnrealEngine's hot reloading, with the added twist that we support it from C#. This means that you hit "Build" in your IDE and the code is automatically reloaded into the editor (with live updates!)

- Complete support for the .NET 4.5/Mobile Profile API. This means, all the APIs you love are available for you to use.

- Async-based programming: we have added special game schedulers that allow you to use C# async naturally in any of your game logic. Beautiful and transparent.

- Comprehensive API coverage of the Unreal Engine Blueprint API.

This is not a supported product by Xamarin. It is currently delivered as a source code package with patches that must be applied to a precise version of Unreal Engine before you can use it. If you want to use higher versions, or lower versions, you will likely need to adjust the patches on your own.

We have set up a mailing list that you can use to join the conversation about this project.

Visit the site for Mono for Unreal Engine to learn more.

(I no longer have time to manage comments on the blog, please use the mailing list to discuss).

Posted on 23 Oct 2014

.NET Foundation: Forums and Advisory Council

Today, I want to share some news from the .NET Foundation.

Forums: We are launching the official .NET Foundation forums to engage with the larger .NET community and to start the flow of ideas on the future of .NET, the community of users of .NET, and the community of contributors to the .NET ecosystem.

Please join us at forums.dotnetfoundation.org. We are using the powerful Discourse platform. Come join us!

Advisory Council: We want to make the .NET Foundation open and transparent. To achieve that goal, we decided to create an advisory council. But we need your help in shaping the advisory council: its role, its reach, its obligations and its influence on the foundation itself.

To bootstrap the discussion, we have a baseline proposal that was contributed by Shaun Walker. We want to invite the larger .NET community to a conversation about this proposal and help us shape the advisory council.

Check out the Call for Public Comments which has a link to the baseline proposal and come join the discussion at the .NET Forums.

Posted on 14 Oct 2014

Markdown Style Guide

Markdown is a file format for easily producing text that can be pleasantly read both on the web and while using command line tools or plain text editors.

Recently, a crop of tools have emerged that deliver some form of WYSIWYG or side-by-side authoring tools to assist writers to visualize the final output as they work.

Authors are turning to these tools to produce documentation that looks good when authoring the document, yet the tools are not true to the spirit and goals of markdown. And in some cases, authors are not familiar with the essence of what makes markdown great, nor the philosophy behind it:

Readability, however, is emphasized above all else. A Markdown-formatted document should be publishable as-is, as plain text, without looking like it’s been marked up with tags or formatting instructions.

Using these editors is the modern equivalent of using Microsoft Word to produce HTML documentation.

The generated markdown files are very easy to produce, but are not suitable for human consumption. They likely violate a number of international treaties and probably will be banned by the EU.

This short post is a set of simple rules to improve your markdown.

They will help you deliver delight to all of your users, not just those using a web browser, but also those casually reading your documentation with a file manager, their console, and most importantly, potential contributors and copy editors that have to interact with your text.

Wrap Your Text

The ideal reading length for reading prose with a monospaced font is somewhere between 72 and 78 characters.

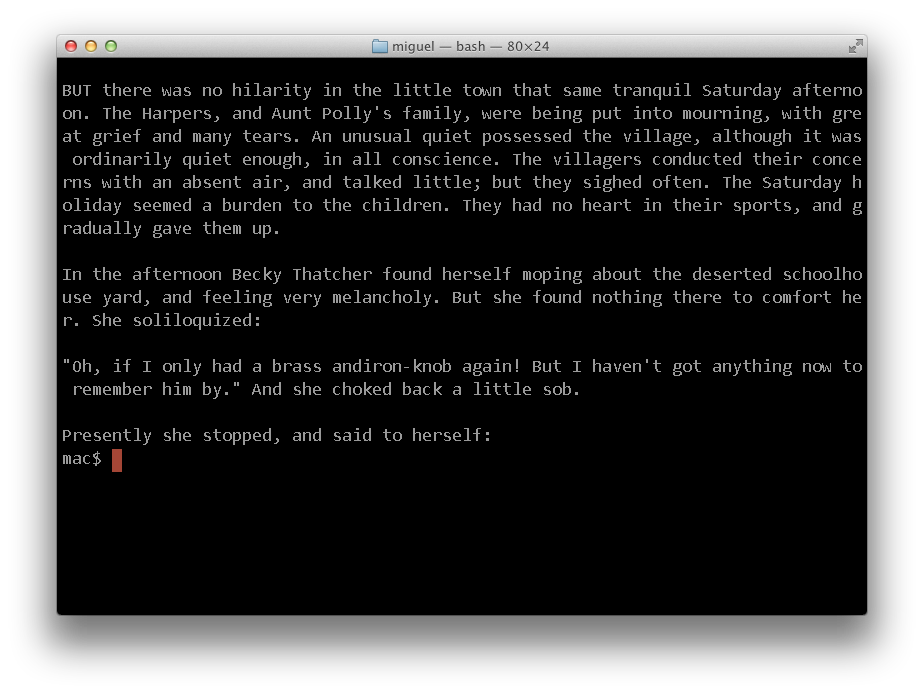

This is text snippet is from Mark Twain's Adventures of Tom Sawyer, with text wrapped around column 72, when reading in an 80x25 console:

It wont matter if you are using a larger console, the text will still be pleasant to read.

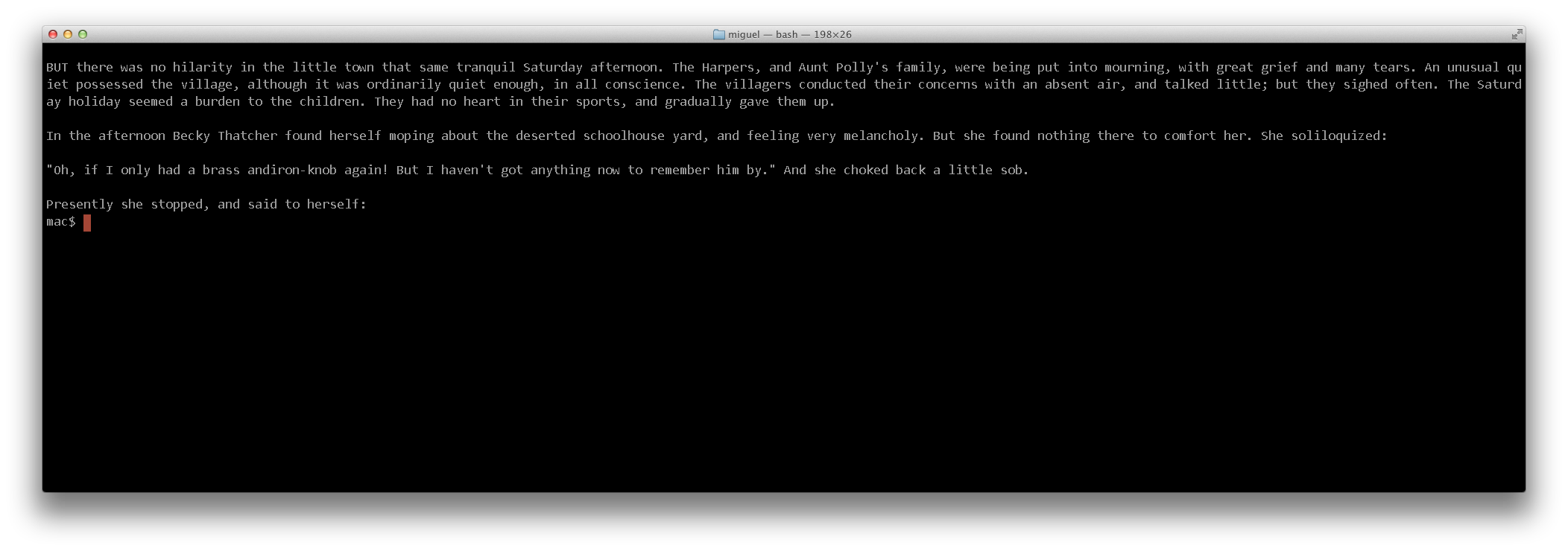

But if you use some of these markdown editors that do not bother wrapping your text, this is what the user would get:

And this is what is more likely to happen, with big consoles on big displays:

There is a reason why most web sites set a maximum width for the places where text will be displayed. It is just too obnoxious to read otherwise.

Many text editors have the ability to reformat your text when you make changes.

This is how you can fill your text in some common editors:

- Emacs: Alt-Q will reformat your paragraph.

- Vim: "V" (to start selection) then "gq" will reformat your selection.

- TextMate: Control-Q.
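If your editor cannot fill paragraphs for you, the same effect can be scripted. What follows is only a minimal sketch, assuming Python and its standard `textwrap` module; the helper name `wrap_markdown` is made up for this example. It treats every blank-line-separated chunk as plain prose, so it would happily mangle code samples, lists and headers. Use it on prose only.

```python
import textwrap


def wrap_markdown(text, width=72):
    """Re-fill each blank-line-separated paragraph to at most `width` columns.

    Hypothetical helper for illustration: every paragraph is treated as
    plain prose, so code samples, lists and headers are not preserved.
    """
    paragraphs = text.split("\n\n")
    wrapped = []
    for paragraph in paragraphs:
        # Collapse existing line breaks and runs of spaces before re-filling.
        flat = " ".join(paragraph.split())
        wrapped.append(
            textwrap.fill(
                flat,
                width=width,
                break_long_words=False,  # never split a long URL in half
                break_on_hyphens=False,
            )
        )
    return "\n\n".join(wrapped)
```

Note that because long words are never split, a single word longer than the width (a URL, say) will still produce an over-long line.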

Consider Using Two Spaces After a Period

When reading text on the console, using two spaces after a period makes it easier to see where phrases end and start.

While there is some debate as to the righteousness of one vs two spaces in the world of advanced typography, these arguments do not apply to markdown text. When markdown is rendered into HTML, the browser will ignore the two spaces and turn them into one, but you will give your users the extra visual cues that they need when reading text.

If you are interested in the topic, check these posts by Heraclitean River and DitchWalk.

Sample Code

For small code snippets, it is best if you just indent your code with spaces, as this will make your console experience more pleasant to use.

Recently many tools started delimiting code with the "```". While this has its place in large chunks of text, for small snippets, it is the visual equivalent of being punched in the face.

Try to punch your readers in the face only when absolutely necessary.

Headers

Unless you have plans to use multiple nested levels of headers, use the underline syntax for your headers, as this is visually very easy to scan when reading on a console.

That is, use:

    Chapter Four: Which iPhone 6 is Right For You.
    ==============================================

    In the previous chapter we established the requirement to buy
    iPhones in packs of six. Now you must choose just whether you
    are going to go for an apologetically aluminum case, or an
    unapologetically plastic iPhone.

Instead of the Atx-style headers:

    # Chapter Four: Which iPhone 6 is Right For You.

The second style can easily drown in a body of text, and cannot help as a visual aid to see where new sections start.
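If you generate documentation from a script, the underlined style is easy to produce. This is a small sketch, assuming Python; `setext_header` is a name invented for this example. Markdown only defines the underlined form for the first two levels ("=" for level one, "-" for level two), and making the underline as long as the title is a convention for readability, not a requirement.

```python
def setext_header(title, level=1):
    """Return `title` followed by an underline of the same length.

    Hypothetical helper for illustration. Only levels 1 ("=") and 2 ("-")
    exist in the underlined header syntax.
    """
    if level not in (1, 2):
        raise ValueError("underlined headers only exist for levels 1 and 2")
    marker = "=" if level == 1 else "-"
    return title + "\n" + marker * len(title)
```

For deeper nesting you would have to fall back to the "#" style, which is why the advice above applies only when you do not need many levels.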

Blockquotes

While markdown allows you to only set the first character for a blockquote, like this:

    > This is a blockquote with two paragraphs. Lorem ipsum dolor sit amet, consectetuer adipiscing elit. Aliquam hendrerit mi posuere lectus. Vestibulum enim wisi, viverra nec, fringilla in, laoreet vitae, risus.

editors like Emacs can reformat that text just fine. All you have to do is set the "Fill Prefix" by positioning your cursor after the "> " and using Control-x ., and then you can use the regular fill command, Alt-Q. This will produce:

    > This is a blockquote with two paragraphs. Lorem ipsum dolor sit amet,
    > consectetuer adipiscing elit. Aliquam hendrerit mi posuere
    > lectus. Vestibulum enim wisi, viverra nec, fringilla in, laoreet
    > vitae, risus.

Lists

Again, while Markdown will happily allow you to write things like:

    * Lorem ipsum dolor sit amet, consectetuer adipiscing elit. Aliquam hendrerit mi posuere lectus. Vestibulum enim wisi, viverra nec, fringilla in, laoreet vitae, risus.
    * Donec sit amet nisl. Aliquam semper ipsum sit amet velit. Suspendisse id sem consectetuer libero luctus adipiscing.

You should love your reader, and once again, if you are using something like Emacs, use the fill prefix to render the list like this instead:

    * Lorem ipsum dolor sit amet, consectetuer adipiscing elit.
      Aliquam hendrerit mi posuere lectus. Vestibulum enim wisi,
      viverra nec, fringilla in, laoreet vitae, risus.
    * Donec sit amet nisl. Aliquam semper ipsum sit amet velit.
      Suspendisse id sem consectetuer libero luctus adipiscing.
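For those not using Emacs, a rough approximation of filling with a prefix can be sketched with Python's standard `textwrap` module. This is an illustration, not a reimplementation of the Emacs command; the function names are invented for this example. The idea is the same for blockquotes and list items: one prefix for the first line, another for the continuation lines.

```python
import textwrap


def fill_with_prefix(text, first_prefix, rest_prefix, width=72):
    """Fill `text` to `width` columns, prefixing the first and later lines.

    Hypothetical helper for illustration; the width includes the prefix.
    """
    flat = " ".join(text.split())  # collapse any existing line breaks
    return textwrap.fill(
        flat,
        width=width,
        initial_indent=first_prefix,
        subsequent_indent=rest_prefix,
        break_long_words=False,
        break_on_hyphens=False,
    )


def fill_blockquote(text, width=72):
    # Every line of a blockquote carries the "> " marker.
    return fill_with_prefix(text, "> ", "> ", width)


def fill_list_item(text, width=72):
    # The bullet goes on the first line; later lines align under the text.
    return fill_with_prefix(text, "* ", "  ", width)
```

Each function handles a single paragraph or a single list item, so a blockquote with two paragraphs needs two calls, separated by a line containing just ">".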

Posted on 30 Sep 2014

Three Tricks in Xamarin Studio

I wanted to share three tricks that I use a lot in Xamarin Studio/MonoDevelop.

Trick 1: Navigate APIs

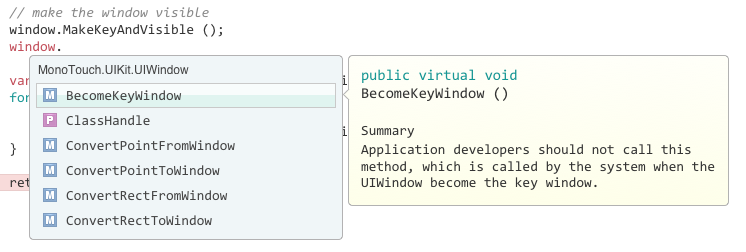

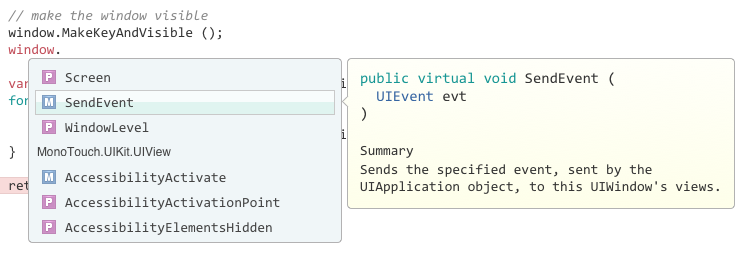

Xamarin Studio's code completion for members of an object defaults to showing all the members sorted by name.

But if you press Control-space, it toggles the rendering and organizes the results. For example, for this object of type UIWindow, it first lists the methods available for UIWindow sorted by name, and then the cluster for its base class UIView:

This is what happens if you scroll to the end of the UIWindow members:

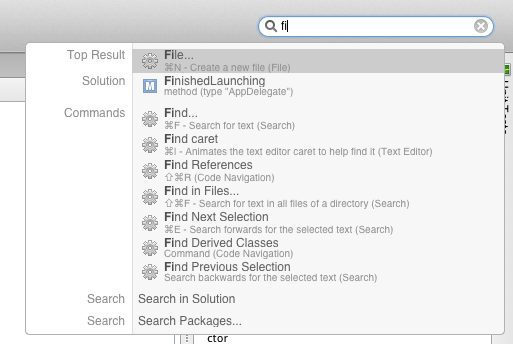

Trick 2: Universal Search

Use the Command-. shortcut to activate the universal search. Once you do this and start typing, it will find matches for both members and types in your solution, as well as IDE commands and the option to perform a full text search:

Trick 3: Dynamic Abbreviation Completion

This is a feature that we took from Emacs's Dynamic Abbrevs.

If you type Control-/ when you type some text, the editor will try to complete the text you are typing based on strings found in your project that start with the same prefix.

Hit Control-/ repeatedly to cycle over possible completions.

Posted on 20 Aug 2014

Five Cross Platform Pillars

The last couple of years have been good to C# and .NET, in particular in the mobile space.

While we started just with a runtime and some basic bindings to Android and iOS back in 2009, we have now grown to provide a comprehensive development stack: from the runtime, to complete access to native APIs, to designers and IDEs and to a process to continuously deliver polish to our users.

Our solution is based on a blend of C# and .NET as well as bindings to the native platform, giving users a spectrum of tools they can use to easily target multiple platforms without sacrificing quality or performance.

As the industry matured, our users found themselves solving the same kinds of problems over and over. In particular, many problems related to targeting multiple platforms at once (Android, iOS, Mac, WinPhone, WinRT and Windows).

By the end of last year we had identified five areas where we could provide solutions for our users. We could deliver a common framework for developers, and our users could focus on the problem they are trying to solve.

These are the five themes that we identified.

- Cross-platform UI programming.

- 2D gaming/retained graphics.

- 2D direct rendering graphics.

- Offline storage, ideally using SQLite.

- Data synchronization.

Almost a year later, we have now delivered four out of the five pillars.

Each one of those pillars is delivered as a NuGet package for all of the target platforms. Additionally, they are Portable Class Libraries, which allows developers to create their own Portable Class Libraries on top of these frameworks.

Cross Platform UI programming

With Xamarin 3.0 we introduced Xamarin.Forms, which is a cross-platform UI toolkit that allows developers to use a single API to target Android, iOS and WinPhone.

Added bonus: you can host Xamarin.Forms inside an existing native Android, iOS or WinPhone app, or you can extend a Xamarin.Forms app with native Android, iOS or WinPhone APIs.

So you do not have to take sides on the debate over 100% native vs 100% cross-platform.

Many developers also want to use HTML and Javascript for parts of their application, but they do not want to do everything manually. So we also launched support for the Razor view engine in our products.

2D Gaming/Retained Graphics

Gaming and 2D visualizations are an important part of applications that are being built on mobile platforms.

We productized the Cocos2D API for C#. While it is a great library for building 2D games (and many developers build their entire experiences with this API), we have also extended it to allow developers to spice up an existing native application.

We launched it this month: CocosSharp.

Offline Storage

While originally our goal was to bring Mono's System.Data across multiple platforms (and we might still bring this as well), Microsoft released a cross-platform SQLite binding with the same requirements that we had: NuGet and PCL.

While Microsoft was focused on the Windows platforms, they open sourced the effort, and we contributed the Android and iOS ports.

This is what powers Azure's offline/sync APIs for C#.

In the meantime, there are a couple of other efforts that have also gained traction: Eric Sink's SQLite.Raw and Frank Krueger's sqlite-net which provides a higher-level ORM interface.

All three SQLite libraries provide NuGet/PCL interfaces.

Data Synchronization

There is no question that developers love Couchbase: a lightweight NoSQL database that supports data synchronization via Sync gateways and Couchbase servers.

While Couchbase used to offer native Android and iOS APIs and you could use those, the APIs were different, since each API was modeled/designed for each platform.

Instead of writing an abstraction to isolate those APIs (which would have been just too hard), we decided to port the Java implementation entirely to C#.

The result is Couchbase Lite for .NET. We co-announced this development with Couchbase back in May.

Since we did the initial work to bootstrap the effort, Couchbase has taken over the maintenance and future development duties of the library and they are now keeping it up-to-date.

While this is not yet a PCL/NuGet, work is in progress to make this happen.

Work in Progress: 2D Direct Rendering

Developers want to have access to a rich API to draw. Sometimes it is used to build custom controls, sometimes to draw charts or to build entire applications based on a 2D rendering API.

We are working on bringing the System.Drawing API to all of the mobile platforms. We have completed an implementation of System.Drawing for iOS using CoreGraphics, and we are now working on both Android and WinPhone implementations.

Once we complete this work, you can expect System.Drawing to be available across the board as a NuGet/PCL library.

If you cannot wait, you can get your hands today on the Mac/iOS version from Mono's repository.

Next Steps

We are now working with our users to improve these APIs. But we won't stop at the API work. We are also adding IDE support to both Xamarin Studio and Visual Studio.

Posted on 20 Aug 2014
