Mono Performance Team

For many years a major focus of Mono has been to be compatible-enough with .NET and to support the popular features that developers use.

We have always believed that it is better to be slow and correct than to be fast and wrong.

That said, over the years we have embarked on some multi-year projects to address some of the major performance bottlenecks: from implementing a precise GC and fine tuning it for a number of different workloads to having implemented now four versions of the code generator as well as the LLVM backend for additional speed and things like Mono.SIMD.

But these optimizations have been mostly reactive: we wait for someone to identify or spot a problem, and then we start working on a solution.

We are now taking a proactive approach.

A few months ago, Mark Probst started the new Mono performance team. The goal of the team is to improve the performance of the Mono runtime and treat performance improvements as a feature that is continously being developed, fine-tuned and monitored.

The team is working both on ways to track performance of Mono over time, implemented support for getting better insights into what happens inside the runtime and has implemented several optimizations that have been landing into Mono for the last few months.

We are actively hiring for developers to join the Mono performance team (ideally in San Francisco, where Mark is based).

Most recently, the team added a new and sophisticated new stack for performance counters which allows us to monitor what is happening on the runtime, and we are now able to export to our profiler (a joint effort between our performance team and our feature team and implemented by Ludovic). We also unified both the runtime and user-defined performance counters and will soon be sharing a new profiler UI.

Posted on 23 Jul 2014

Better Crypto: Did Snowden Succeed?

Snowden is quoted on Greenwald's new book "No Place to Hide" as wanting to both spark a debate over the use of surveillance and to get software developers to adopt and create better encryption:

While I pray that public awareness and debate will lead to reform, bear in mind that the policies of men change in time, and even the Constitution is subverted when the appetites of power demand it. In words from history: Let us speak no more of faith in man, but bind him down from mischief by the chains of cryptography.[...]

The shock of this initial period [after the first revelations] will provide the support needed to build a more equal internet, but this will not work to the advantage of the average person unless science outpaces law. By understanding the mechanisms through which our privacy is violated, we can win here. We can guarantee for all people equal protection against unreasonable search through universal laws, but only if the technical community is willing to face the threat and commit to implementing over-engineered solutions.

Last week Matthew Green asked:

So has Snowden succeeded? Have developers 'created better encryption' since his leaks?

— Matthew Green (@matthew_d_green) May 14, 2014Only time will be able to answer whether as a community the tech world can devise better and simpler tools for normal users to have their privacy protected by default.

Snowden has succeeded in starting an important discussion and having software developers and their patrons react to the news.

At Xamarin we build developer tools for Android and iOS developers. It is our job to provide tools that developers use on a day to day basis to build their applications, and we help them build these mobile applications.

In the last year, we have noticed several changes in our developer userbase. Our customers are requesting both features and guidance on a number of areas.

Developers are reaching to us both because there is a new understanding about what is happening to our electronic communications and also response to rapidly changing requirements from the public and private sectors.

Among the things we have noticed:

- Using Trusted Roots Respectfully: For

years, we tried

to educate

our users on what they should do when dealing with

X509 certificates. Two years ago, most users would

just pick the first option "Ignore the problem".

Today this is no longer what developers do by default. - Certificate Pinning: more than ever, developers are using certificate pinning to ensure that their mobile applications are only talking to the exact party they want to.

- Ciphersuite Selection: We recently had to extend the .NET API to allow developers to force our networking stack to control which cipher suites the underlying SSL/TLS stack uses. This is used to prevent weak or suspected ciphersuites to be used for the communications.

- Request for more CipherSuites: Our Mono runtime implements its own SSL/TLS and crypto stacks entirely in C#. Our customers have asked us to support new cipher suites on newer versions of the standards.

Sometimes developers can use the native APIs we provide to achieve the above goals, but sometimes the native APIs on Android and iOS make this very hard or do not expose the new desired functionality, so we need to step in.

Posted on 21 May 2014

News from the .NET World

Some great announcements today from the Microsoft world.

#1 Open Source ASP.NET stack

The first one is that Microsoft's next generation web stack (ASP.NET vNext) is open source from the ground up, and runs on Mono on both Linux and Mac.

There are plenty of other design principles in this new version of ASP.NET. I provide a translation from Microsoft speak into Unix speak in parenthesis:

- Cloud-ready out of the box (this is code name for "can run with different versions of .NET side by side").

- A single programming model for Web sites and services

- Low-latency developer experience (Refresh on browser recompiles)

- Make high-performance and high-productivity APIs and patterns available - enable them both to be used and compose together within a single app.

- Fine-grained control available via command-line tools and standard file formats.

- Delivered via NuGet (package manager, similar to Node's NPM or Ruby Gems).

- Release as open source via the .NET Foundation.

- Can run on Mono, on Mac and Linux.

Update: And the software is live at http://github.com/aspnet

Client Libraries to Microsoft Services

They are shipping a number of new components to talk to their online services, and they all have a license suitable for being used from platforms other than Windows.

Posted on 12 May 2014

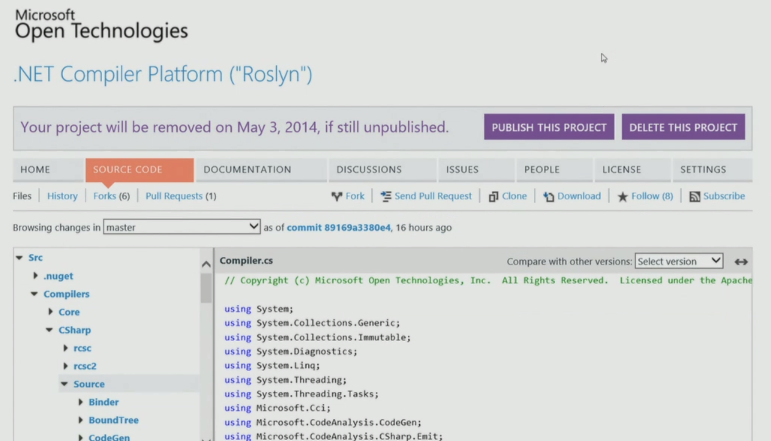

Roslyn Update

As promised, we are now tracking the Unix-friendly Roslyn port on Mono's GitHub Organization.

We implemented a few C# 6.0 features in Mono's C# compiler to simplify the set of patches required to compile Roslyn.

So you will need a fresh Mono (from git).

Posted on 28 Apr 2014

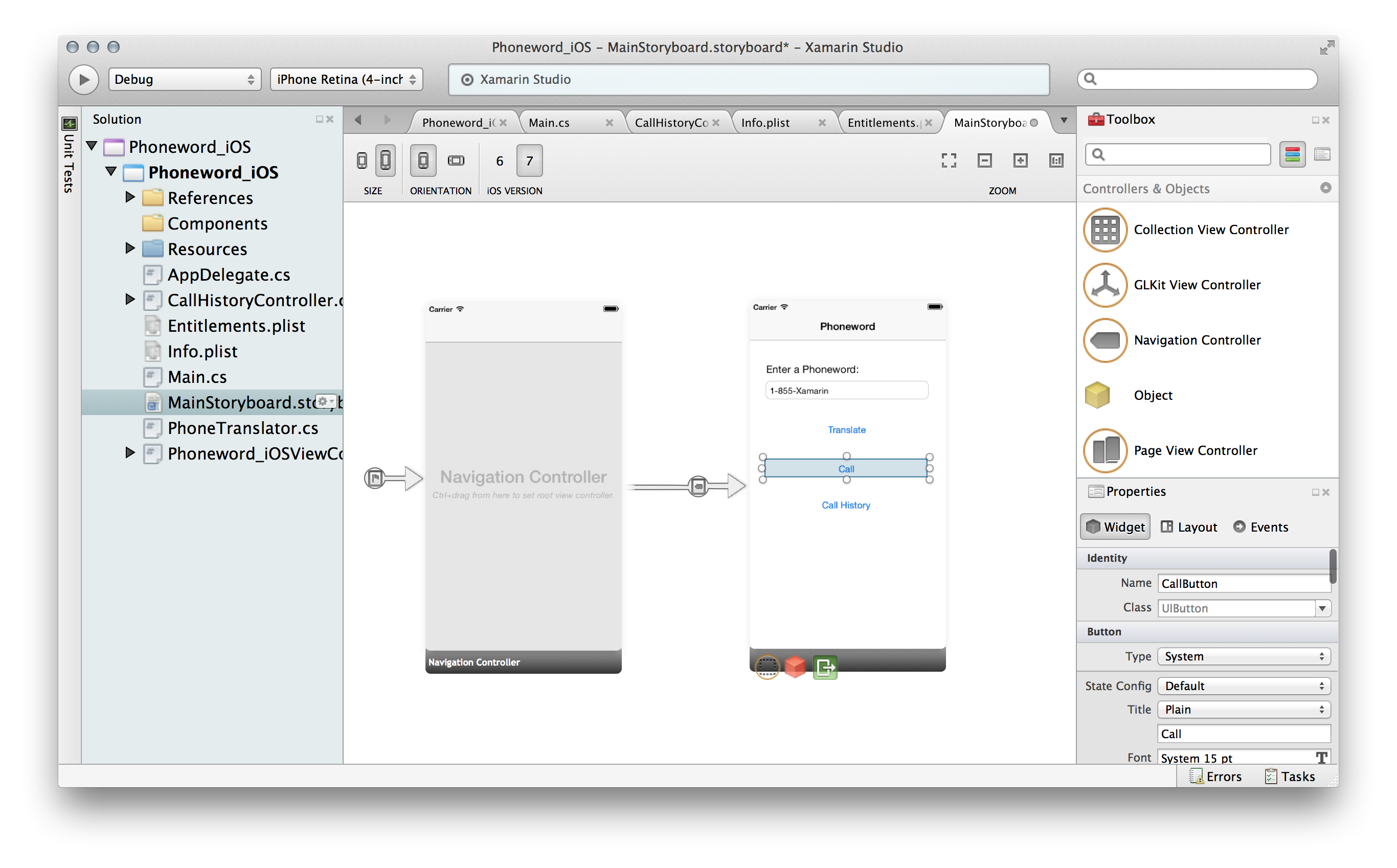

Documentation for our new iOS Designer

The team has put together some beautiful getting started documentation for our iOS User Interface Designer.

In particular, check a couple of hot features on it:

- Using Custom Controls with the designer. Shows how you can render your C# code live in the designer.

- Using AutoLayout with the iOS Designer.

Posted on 14 Apr 2014

Mono on PS4

We have been working with a few PlayStation 4 C# lovers for the last few months. The first PS4 powered by Mono and MonoGame was TowerFall:

We are very excited about the upcoming Transistor, by the makers of Bastion, coming out on May 20th:

Mono on the PS4 is based on a special branch of Mono that was originally designed to support static compilation for Windows's WinStore applications [1].

[1] Kind of not very useful anymore, since Microsoft just shipped static compilation of .NET at BUILD. Still, there is no wasted effort in Mono land!

Posted on 14 Apr 2014

Mono and Roslyn

Last week, Microsoft open sourced Roslyn, the .NET Compiler Platform for C# and VB.

Roslyn is an effort to create a new generation of compilers written in managed code. In addition to the standard batch compiler, it contains a compiler API that can be used by all kinds of tools that want to understand and manipulate C# source code.

Roslyn is the foundation that powers the new smarts in Visual Studio and can also be used for static analysis, code refactoring or even to smartly navigate your source code. It is a great foundation that tool developers will be able to build on.

I had the honor of sharing the stage with Anders Hejlsberg when he published the source code, and showed both Roslyn working on a Mac with Mono, as well as showing the very same patch that he demoed on stage running on Mono.

Roslyn on Mono

At BUILD, we showed Roslyn running on Mono. If you want to run your own copy of Roslyn today, you need to use both a fresh version of Mono, and apply a handful of patches to Roslyn [2].

The source code as released contains some C# 6.0 features so the patches add a bootstrapping phase, allowing Roslyn to be built with a C# 5.0 compiler from sources. There are also a couple of patches to deal with paths (Windows vs Unix paths) as well as a Unix Makefile to build the result.

Sadly, Roslyn's build script depends on a number of features of MSBuild that neither Mono or MonoDevelop/XamarinStudio support currently [3], but we hope we can address in the future. For now, we will have to maintain a Makefile-based system to use Roslyn.

Our patches no longer apply to the tip of Roslyn master, as Roslyn is under very active development. We will be updating the patches and track Roslyn master on our fork moving forward.

Currently Roslyn generates debug information using a Visual Studio native library. So the /debug switch does not work. We will be providing an alternative implementation that uses Mono's symbol writer.

Adopting Roslyn: Mono SDK

Our goal is to keep track of Roslyn as it is being developed, and when it is officially released, to bundle Roslyn's compilers with Mono [6].

But in addition, this will provide an up-to-date and compliant Visual Basic.NET compiler to Unix platforms.

Our plans currently are to keep both compilers around, and we will implement the various C# 6.0 features into Mono's C# compiler.

There are a couple of reasons for this. Our batch compiler has been fine tuned over the years, and for day-to-day compilation it is currently faster than the Roslyn compiler.

The second one is that our compiler powers our Interactive C# Shell and we are about to launch something very interesting with it. This functionality is not currently available on the open sourced Roslyn stack.

In addition, we plan on distributing the various Roslyn assemblies to Mono users, so they can build their own tools on top of Roslyn out of the box.

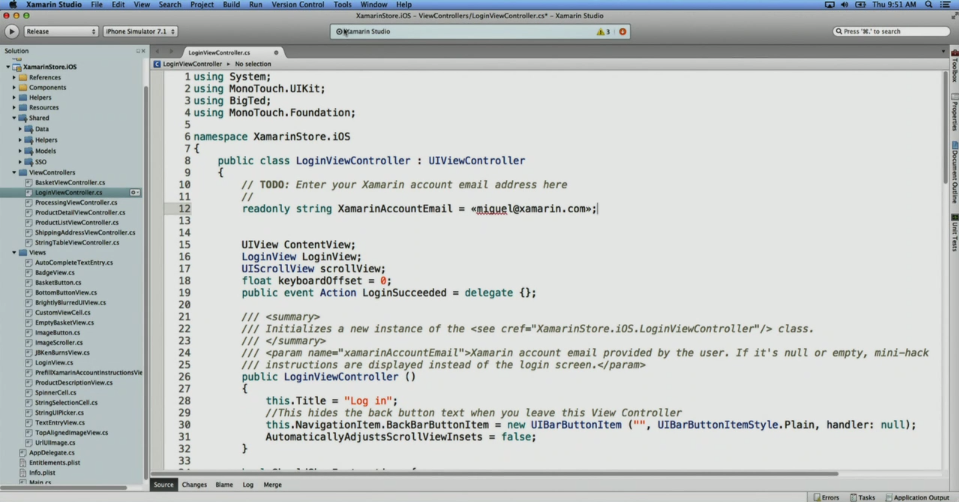

Adopting Roslyn: MonoDevelop/Xamarin Studio

Roslyn really shines for use in IDEs.

We have started an effort to adopt Roslyn in MonoDevelop/Xamarin Studio. This means that the underlying NRefactory engine will also adopt Roslyn.

This is going to be a gradual process, and during the migration the goal is to keep using both Mono's C# compiler as a service engine and bit by bit, replace with the Roslyn components.

We are evaluating various areas where Roslyn will have a positive impact. The plan is to start with code completion [4] and later on, support the full spectrum of features that NRefactory provides (from refactoring to code generation).

C# Standard

While not related to Roslyn, I figured it was time to share this.

For the last couple of months, the ECMA C# committee has been working on updating the spec to reflect C# 5. And this time around, the spec benefits from having two independent compiler implementations.

Mono Project and Roslyn

Our goal is to contribute fixes to the Roslyn team to make sure that Roslyn works great on Unix systems, and hopefully to provide bug reports and bug fixes as time goes by.

We are very excited about the release of Roslyn, it is an amazing piece of technology and one of the most sophisticated compiler designs available. A great place to learn great C# idioms and best practices [5], and a great foundation for great tooling for C# and VB.

Thanks to everyone at Microsoft that made this possible, and thanks to everyone on the Roslyn team for starting, contributing and delivering such an ambitious project.

Notes

[1] Roslyn uses a few tracing APIs that were not available on Mono, so you must use a newer version of Mono to build Roslyn.

[2] We even include the patch to add french quotes that Anders demoed. Make sure to skip that patch if you don't want it :-)

[3] From Michael Hutchinson:

- There are references of the form:

<Reference Include="Microsoft.Build, Version=$(VisualStudioReferenceAssemblyVersion), Culture=neutral, PublicKeyToken=b03f5f7f11d50a3a">

This is a problem because our project system tries to load project references verbatim from the project file, instead of evaluating them from the MSBuild engine. This would be fixed by one of the MSBuild integration improvements I've proposed. - There's an InvalidProjectFileException error from the xbuild engine when loading one of the targets files that's imported by several of the code analysis projects, VSL.Settings.targets. I'm pretty sure this is because it uses MSBuild property functions, an MSBuild 4.0 feature that xbuild does not support.

- They use the

AllowNonModulatedReferencemetadata on some references and it's completely undocumented, so I have no idea what it does and what problems might be caused by not handling it in xbuild. - One project can't be opened because it's a VS Extension project. I've added the GUID and name to our list of known project types so we show a more useful error message.

- A few of the projects depend on Microsoft.Build.dll, and Mono does not have a working implementation of it yet. They also reference other MSBuild assemblies which I know we have not finished.

[4] Since Roslyn is much better at error recovery and has a much more comprehensive support for code completion than Mono's C# compiler does. It also has much better support for dealing with incremental changes than we do.

[5] Modulo private. They use

private everywhere, and that is just plain ugly.

[6] We will find out a way of selecting which compiler to use,

either mcs (Mono's C# Compiler) or Roslyn.

Posted on 09 Apr 2014

ISO C++ 2D API

Herb Sutter from the ISO C++ group, reached out to the Cairo folks:

We are actively looking at the potential standardization of a basic 2D drawing library for ISO C++, and would like to base it on (or outright adopt, possibly as a binding) solid prior art in the form of an existing library.

And also:

we are focused on current Cairo as a starting point, even though it's not C++ -- we believe Cairo itself it is very well written C (already in an OO style, already const-correct, etc.).

Congratulations to the Cairo guys for designing such a pleasant to use 2D API.

But this would not be a Saturday blog post without pointing out that Cairo's C-based API is easier and simpler to use than many of those C++ libraries out there. The more sophisticated the use of the C++ language to get some performance benefit, the more unpleasant the API is to use.

The incredibly powerful Antigrain sports an insanely fast software renderer and also a quite hostile template-based API.

We got to compare Antigrain and Cairo back when we worked on Moonlight. Cairo was the clear winner.

We built Moonlight in C++ for all the wrong reasons ("better performance", "memory usage") and was a decision we came to regret. Not only were the reasons wrong, it is not clear we got any performance benefit and it is clear that we did worse with memory usage.

But that is a story for another time.

Posted on 04 Jan 2014

Debugging Remote Mono Targets

Few guys have approached us recently about doing remote debugging of a Mono process. Typically this involves an underpowered system, or some kind of embedded system running Mono, and a fancy Mac or PC on the other end.

These are the instructions that Michael Hutchinson kindly provided on how to remotely debug your process using either Xamarin Studio or MonoDevelop:

Remote debugging is actually really easy with the Mono soft debugger. The IDE sends commands over TCP/IP to the Mono Soft Debugger agent inside the runtime. Depending how you launch the debuggee, you can either have it connect to the IDE over TCP, or have it open a port and wait for the IDE to connect to it.For simple prototyping purposes, you can just set the

MONODEVELOP_SDB_TESTenv var, and a new "Run->Run With->Custom Soft Debugger" command will show up in Xamarin Studio / MonoDevelop, and you can specify an arbitrary IP and port or connect or or listen on, and optionally a command to run. Then you just have to start the debuggee with the correct--debugger-agentarguments (see the Mono manpage for details), start the connection, and start debugging.For a production workflow, you'd typically create a MonoDevelop addin with a debugger engine and session subclassing the soft debugger classes, and overriding how to launch the app and set up the connection parameters. You'd typically have a custom project type too subclassing the DotNetProject, so you could override how the project was built and executed, and so that the new debugger engine could be the primary debugger for projects of that type. You'd get all the default .NET/Mono project and debugger functionality "for free".

You can get some inspiration on how to build your own add-in from the old MeeGo add-in. It has bitrotted, since MeeGo is no more, but it is good enough as a starting point.

Posted on 29 Oct 2013

Hiring Developers

I am hiring software developers.

We are growing our Xamarin Studio/MonoDevelop, Visual Studio, iOS and Android teams.

Ideally, you are a C# programmer and ideally, you relocate to Boston, MA. But we can work with remote employees

Posted on 22 Aug 2013

« Newer entries | Older entries »

Blog Search

Archive

- 2024

Apr Jun - 2020

Mar Aug Sep - 2018

Jan Feb Apr May Dec - 2016

Jan Feb Jul Sep - 2014

Jan Apr May Jul Aug Sep Oct Nov Dec - 2012

Feb Mar Apr Aug Sep Oct Nov - 2010

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2008

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2006

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2004

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2002

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Dec

- 2022

Apr - 2019

Mar Apr - 2017

Jan Nov Dec - 2015

Jan Jul Aug Sep Oct Dec - 2013

Feb Mar Apr Jun Aug Oct - 2011

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2009

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2007

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2005

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2003

Jan Feb Mar Apr Jun Jul Aug Sep Oct Nov Dec - 2001

Apr May Jun Jul Aug Sep Oct Nov Dec