Road to MonoDevelop 2.4: Navigation

Perhaps my favorite feature in MonoDevelop 2.4 is the "Navigate To" functionality that we added. This new feature is hooked to Control-comma on Linux and Windows and to Command-. on Mac.

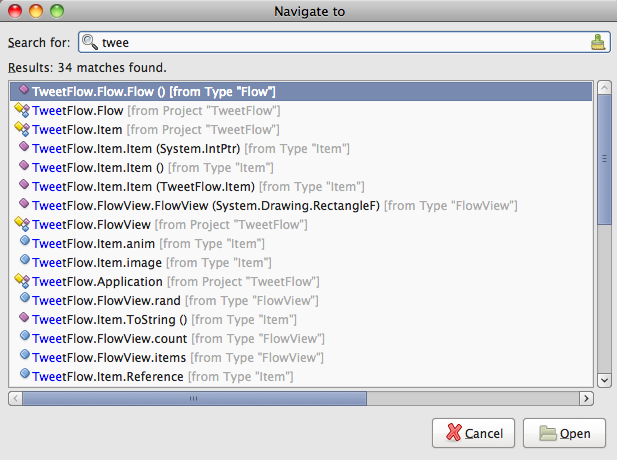

This feature lets you quickly jump to a file, a class or a method:

I used to navigate between my files by opening then from the solution explorer, and then using Control-tab or the tabs. Needless to say, the old way just felt too clumsy for a fast typist like me.

With Navigate-To, I can just hit the hotkey and then like I would in Emacs, type the filename, type or method and jump directly to the method. This is fabulous.

This will also search substrings, so you can either start typing the name of a method or you can fully qualify the method (for example: "Vector.ToString").

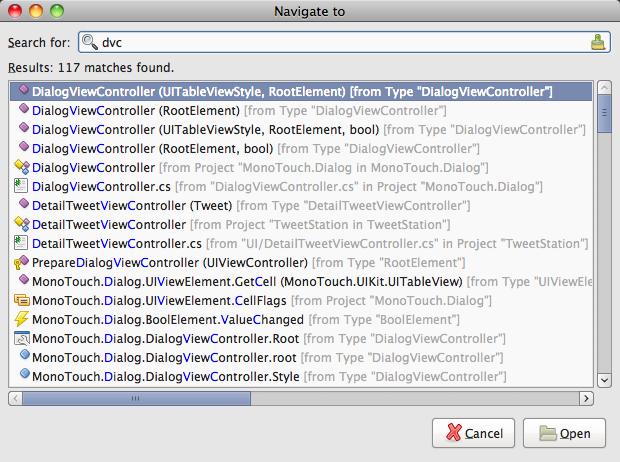

But it gets better, you can use abbreviations, so instead of typing "DialogViewController" you can just type "dvc":

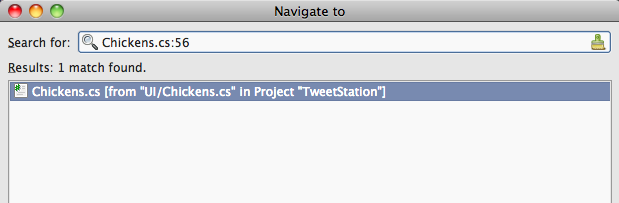

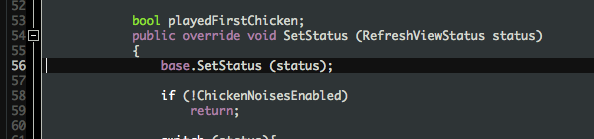

BREAKING UPDATE Michael just told me of a cute extra feature: if your match is for a filename, if you type ":NUMBER" it will jump to the selected file to the specified line number.

The result:

Posted on 14 Jun 2010

Conditional Attribute

One cute feature of C# 1.0 was the introduction of the Conditional attribute. When you apply the conditional attribute to a method, calls to this method are only included in the resulting code if the appropriate define is set.

For instance, consider:

[Conditional ("DEBUG")]

void Log (string format, params object [] args)

{

Console.WriteLine (String.Format (format, args));

}

Calls to Foo.Log become no-ops unless you pass the -define:DEBUG command line option to the compiler.

What is interesting about this compiler-supported feature is that that the code inside the method Log is checked for errors during compilation as any other regular method. They are first class citizens.

Posted on 12 Jun 2010

My first iPhone app

I am proud to introduce my first iPhone App built entirely using standard HTML5 technologies.

I felt that I had to go with HTML 5 as I did not want to write the app once for the iPhone and once for the Android. I am also open sourcing this application in its entirety, to help future generation of mobile HTML 5 developers learn from my experiences and hopefully help them write solid, cross platform mobile applications using HTML 5.

I use this app every time we go to a pub, or when we are having lunch at the Cambridge Brewing Company.

Before you check it out, my lawyer has advised me that I need to add the following disclaimer:

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

I give you: iCoaster.

Stay tuned for my Cheese Table app, coming soon to the iPad.

What People Are Saying about iCoaster!

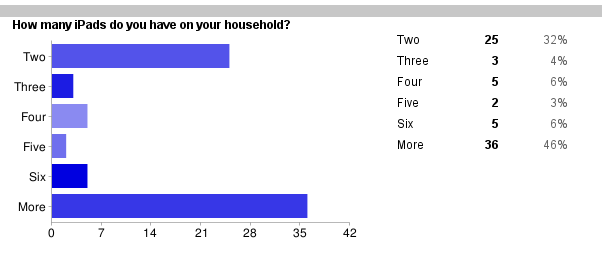

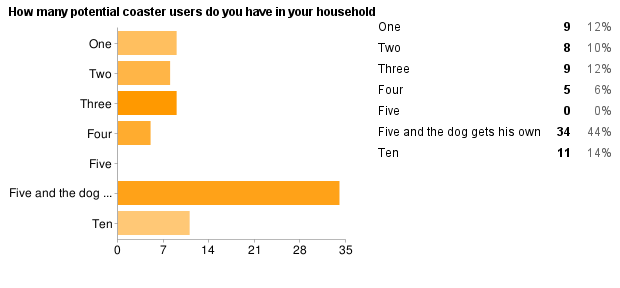

From my survey on iCoaster for iPad:

"I am currently resorting to use my four iPhones to create one giant iCoaster; this is ridiculous. Need iCoaster HD!!!!""Buying an iPad every time I want to put a drink down is expensive, though it is better than getting those little rings all over my shiny new table."

"Four coasters per iPad would be ideal"

"Please keep up the great work. Not sure what I would do without this app, would at least have a lot of ruined coffee tables."

Sadly, we do have some bugs being reported, and I can assure everyone that I am working around the clock to fix these issues. Ensuring that iCoaster is reliable is my top priority:

"If I put my drink on iCoaster, and then rotate my phone to the landscape orientation, my drink gets spilled onto my lap. Please fix this in the iPad version."

Survey Results as of 14:50 EST

Updates

Update: We have confirmation that it works on WebOS and Blackberry as well. HTML5 FTW!

Update 2: Due to popular demand, I am launching an effort to bring iCoaster to the iPad. If you want to participate in the beta for iCoasterHD, please fill in this survey, your feedback will help me prioritize features.

Update 3: iCoaster works on walls too: http://yfrog.com/c9wlkogj.

Update 4: For those of you complaining about the missing DOCTYPE Geoff Norton has done what any honorable open source contributor would have done: he forked iCoaster and made it HTML "compliant": icoaster-fork.

Update 5: After today's brouhaha over Apple's HTML 5, Mike Shaver from Mozilla talks about the elephant in the room.

Update 6: iCoaster's HTML 5 fork has been forked to support full-screen on WebKit and Opera browsers.

Update 7: We have a Silverlight port! This enables iCoaster to run on the upcoming Windows Compact Edition Media 7 Series Phone Embedded Release Plus Pro Advanced.

Update 8: We got a Unity port of iCoaster, and showing true open source spirit, sethillgard open sourced the code.

Posted on 04 Jun 2010

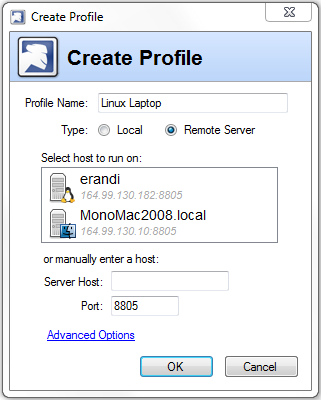

First Beta of MonoTools 2 for VisualStudio

Last week we released our first beta for the upcoming MonoTools2.

There are four main features in MonoTools 2:

- Soft debugger support.

- Faster transfer of your program to the deployment system.

- Support for Visual Studio 2010 in addition to 2008.

- Polish, polish and more polish.

Getting Started

Download our betas from this page. On Windows, you would install our plugin for either 2008 or 2010 and you need to install Mono 2.6.5 on the target platform (Windows, Linux or MacOS).

On Linux, run `monotools-gui-server' or `monotools-server', this will let Visual Studio connect to your machine. Then proceed from Windows.

On MacOS, double click on the "MonoTools Server" application.

Once you run those, MonoTools will automatically show you the servers on your network that you can deploy to or debug:

ASP.NET debugging with this is a joy!

Soft Debugger

This release is the first one that uses our new soft debugger. With is a more portable engine to debug that will allow our users to debug targets other than Linux/x86 for example OSX and Windows.

This is the engine that we use on MonoTouch and that we are using for Mono on Android.

Our previous debugger, a hard debugger, worked on Linux/x86 systems but was very hard to port to new platforms and to other operating systems. With our new soft debugger we can debug Mono applications running on Windows, helping developers test before moving to Linux.

Faster Transfers

When you are developing large applications or web applications, you want your turn around time from the time that you run hit Run to the site running on Linux to be as short as possible.

Cheap Shot Alert: When dealing with large web sites, we used to behave like J2EE: click run and wait for a month for your app to be loaded into the application server.

This is no longer the case. Deployments that used to take 30 seconds now take 2 seconds.

Support for Visual Studio 2010

Our plugin now supports the Visual Studio 2010 new features for plugin developers.

This means you get a single .vsix package to install, no system installs, no registry messing around, no dedicated installers, none of that.

The full plugin installs in 3 seconds. And you can remove it just as easily.

Then you can just from VS's Mono menu pick "Run In Mono" and pick whether you want to run locally or remotely.

We now also support multiple profiles, so you can debug from Visual Studio your code running on Linux boxes, Mac boxes or your local system.

Polish and more Polish

MonoTools was our first Windows software and we learned a lot about what Windows developers expected.

We polished hundreds of small usability problems that the community reported in our last few iterations. You can also check our release notes for the meaty details.

Appliances

And we integrate directly with SuseStudio to create your ready-to-run appliances directly from Visual Studio.

Posted on 03 Jun 2010

Linux for Consumers: MeeGo Updates

Excited to see the work happening on the Linux consumer space in the MeeGo Universe. There is now a MeeGo 1.0 download available for everyone to try out.

At Novell we have been contributing code, design and artwork for this new consumer-focused Linux system and today both Michael Meeks and Aaron Bockover blog about the work that they have been doing on MeeGo.

These screenshots are taken directly from Aaron's and Michael's blog posts. Aaron discusses the new UI for the music player Banshee and Michael discusses the new UI for the Email/Calendar program.

Media Panel in MeeGo

You can still get access to the full Banshee UI themed appropriately:

Themed MeeGo UI for Banshee

Check Aaron's blog for the details on the design process and the new features coming out for it.

Then, on the Evolution side of things Michael discusses Evolution Express a renewed effort to make Evolution suitable for netbooks. The work done there is amazing, here are some screenshots:

Evolution Express on MeeGo

Just like Banshee, Evolution now integrates with MeeGo panels, like this:

Summary of your tasks on the MeeGo Panel

This is what the new preferences panel looks like:

Themed MeeGo UI for Banshee

And finally the calendar:

Evolution Express Calendar on MeeGo

Evolution Express Calendar on MeeGo

Third Party Applications

You can also run existing Mono applications on MeeGo. I give you the photo management application F-Spot:

F-Spot on MeeGo

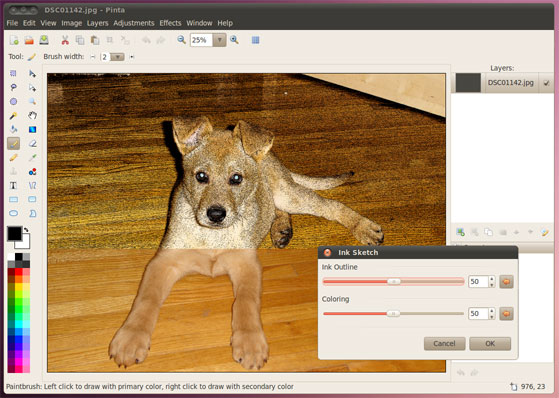

And this is Jonathan's Pinta painting program built on Mono with Gtk#:

The Mono-based paint program Pinta

Pinta is fascinating as it shows how much punch can be packed by CIL code. Pinta and all of its effects use 328k of disk space and for distribution it only takes 108k of disk space.

Development Tools

And we are of course very happy to be supporting developers that want to target MeeGo using Windows, Mac or Linux with our MonoDevelop plugin for MeeGo.

If you are more of a Visual Studio developer, our upcoming MonoTools for Visual Studio 2.0 will also support developing applications for MeeGo from Windows.

MeeGo

I am blown away by the way that everyone involved in MeeGo has been able to execute on the vision of bringing Linux to the consumer space by the way of the netbook.

Kudos to everyone involved.

Posted on 27 May 2010

MonoDroid - Mono for Android Beta Program

We are hard at work on MonoDroid -- Mono for Android -- and we have created a survey that will help us prioritize our tooling story and our binding story.

If you are interested in Monodroid and in participating on the beta program, please fill out our Monodroid survey.

Here is what you can expect from Mono on Android:

- C#-ified bindings to the Android APIs.

- Full JIT compiler: this means full LINQ, dynamic, and support for the Dynamic Language Runtime (IronPython, IronRuby and others).

- Linker tools to ship only the bits that you need from Mono.

- Ahead-of-Time compilation on install: when you install a Monodroid application, you can have Mono precompile the code to avoid any startup performance hit from your application.

We are still debating a few things like:

- Shared Full Mono runtime vs embedded/linked runtime on each application.

- Which IDE and OS to make our primary developer

platform. Our options are VS2010, VS2008 and

MonoDevelop and the platforms are Windows, OSX and

Linux.

We are currently leaning towards using VS2008/2010 for Windows during the beta and later MonoDevelop on Linux/Mac.

Like we did with MonoTouch, we will bring developers into the preview in batches of 150 developers, please enter enough information on the "comments" section if you want to be in the early batches.

Posted on 21 May 2010

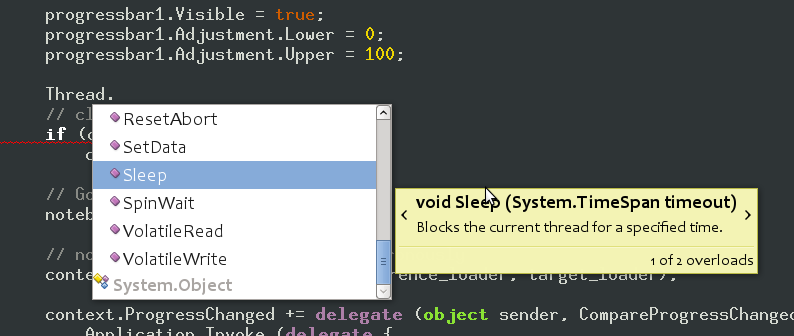

Group Completion in MonoDevelop 2.4

In our Beta for MonoDevelop 2.4 we introduced a new feature designed to help developers better explore a new API.

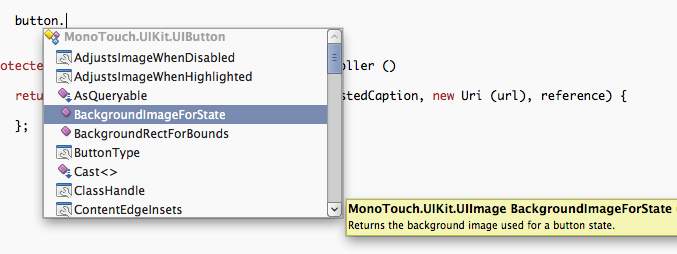

Many developers use the IDE code-completion as a way of quickly navigate and explore an API. There is no need to flip through the documentation or Google for every possible issue, they start writing code and instantiating classes and the IDE offers completion for possible methods, properties and events to use as well as small text snippets describing what each completion does as well as parameter documentation:

With MonoTouch, we were dealing with a new type hierarchy, with new methods. We found that our users wanted to explore the API through code completion, but they wanted more context than just the full list of possible options at some point.

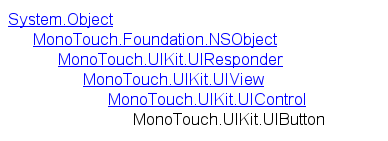

For example, the UIButton class has this hierarchy:

Looking through the methods, properties and events of this class can be confusing, as for the UIButton class there were some 140 possible completions that came from the complete hierarchy. Sometimes the user knows that the method they want is a button-specific method, and as fascinating as UIResponder, UIView or UIControl might be, the method they are looking for is not going to be there.

With MonoDevelop 2.4 we introduced a new shortcut during code completion that changes the completion order from alphabetic to grouped by type, with completions from the most derived type coming up first:

To switch between the modes you use Control-space when the popup is visible. You can use shift-up and shift-down to quickly move between the groups as well.

I have been using this feature extensively while exploring new APIs.

Posted on 09 May 2010

MonoDevelop's New Search Bar

MonoDevelop 2.4 was a release in which we focused on improving the ergonomics of the IDE. We did this in dozens of places and we did this by dogfooding the IDE and comparing it to other tools and environments that we have been using.

With MonoDevelop 2.0 and earlier we used a dialog box like most other GUI applications from 2005. The dialog would remain on top of the text and the user would press next, and move the dialog box around as the matches were found.

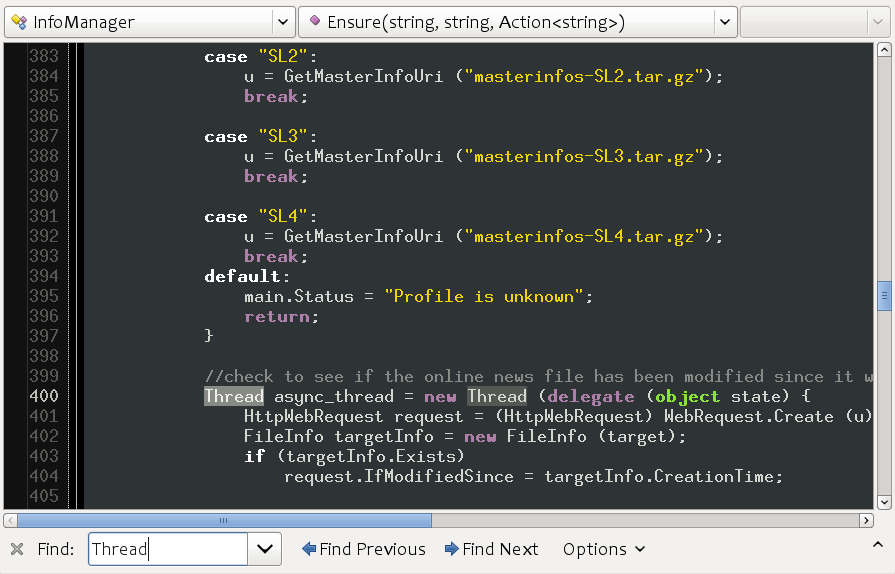

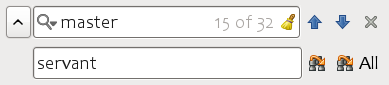

In the current MonoDevelop (2.2) we adopted a more Firefox-like UI, this is what the search bar looks like:

We have a relatively big bar at the bottom of the screen, big labels, a drop down for picking previously searched items and an options menu that would let users pick manually case sensitivity, whole world matching or toggling regular expressions.

This took too much space, in a prime location of screen real estate. Additionally, the features although present were hard to pick. You would start an incremental search with Control-f but if you wanted to change the settings, you would have to use the mouse to access the options menu. If you later changed your mind, you would have to change the defaults again.

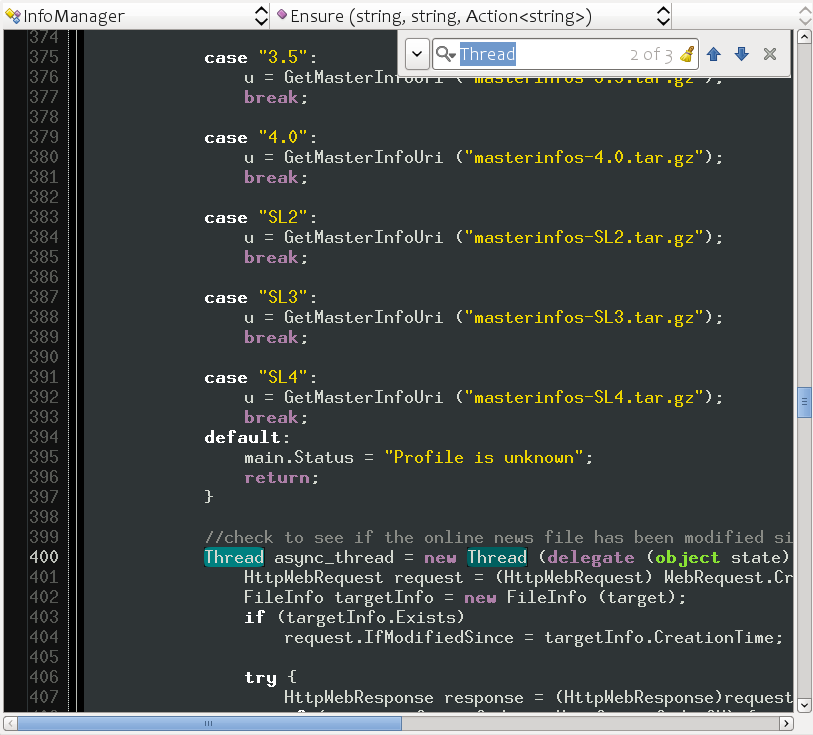

With the new release of MonoDevelop (2.4) we have changed this again, this time adopting the Google Chrome search bar and done a few other usability changes:

The first thing to notice is that instead of taking valuable horizontal space in the form of a full row, we now only take a corner of the screen, and we take it on the top right corner which is less likely to contain the information you are looking for as you search forward.

Case sensitivity searches now use the same model used by Emacs. If you start searching for a term and you type only lowercase letters, the search will be case insensitive.

Searching for "thread" will match "thread", "Thread" or "THREAD". But if at any point during the search you type an uppercase letter, then the search for this particular activation will switch into case-sensitive search. Searching for "Thread" will only match the word "Thread" and not "thread" or "THREAD".

And we also highlight matches like some Mac applications do, all matching words in the screen are highlighted, and the current match gets both a brighter color as well as a bubble that inflates and deflates on every match.

The replace functionality is built into this new UI, and is accessed either with a hotkey (Control-H) or by clicking on the left-side icon:

Just like Google Chrome, we use a watermark to show the number of matches in the document.

MonoDevelop 2.4 is packed with new features, and I hope to blog about some of the design decisions of the new feature as time permits. In the meantime, check out the list of new features in the Beta for MonoDevelop 2.4.

Posted on 06 May 2010

Pinta 0.3 is out

Jonathan Pobst has announced the release of Pinta 0.3:

Live Preview

Live Preview

Pinta is a lightweight paint application for Linux, OSX and Windows written entirely in C# and using the Gtk# toolkit for its user interface. From the FAQ:

Is Pinta a Port of Paint.NET?Not really, it's more of a clone. The interface is largely based off of Paint.NET, but most of the code is original. The only code directly used by Pinta is for the adjustments and effects, which is basically a straight copy from Paint.NET 3.0.

Regardless, we are very grateful that Paint.NET 3.0 was open sourced under the MIT license so we could use some of their awesome code.

Jonathan further adds that this release is very close to feature parity with the original Paint.NET.

Posted on 03 May 2010

CLI on the Web

In the last few days Joe Hewitt has been lamenting the state of client side web development technologies on twitter. TechCrunch covered the progress in their The State Of Web Development Ripped Apart In 25 Tweets By One Man.

Today Joe followed up with a brilliant point:

joehewitt: If CLI was the ECMA standard baked into browsers instead of ECMAScript we'd have a much more flexible web: http://bit.ly/sLILI

ECMA CLI would have given the web both strongly typed and loosely typed programming languages. It would have given developers a choice between performance and scriptability. A programming language choice (use the right tool for the right job) and would have in general made web pages faster just by moving performance sensitive code to strongly typed languages.

A wide variety of languages would have become first-class citizens on the web client. Today those languages can run, but they can run in plugin islands. They can run inside Flash or they can run inside Silverlight, but they are second class citizens: they run on separate VMs, and they are constrained on how they talk to the browser with very limited APIs (only some 20 or so entry points exist to integrate the browser with a plugin, and most advance interoperability scenarios require extensive hacks and knowing about a browser internal).

Perhaps the time has come to experiment embedding the ECMA CLI inside the browser. Not as a separate plugin, and not as a plugin island, but as a first-class VM inside the browser. Running side-by-side to the Javascript engine.

Such an effort would have two goals:

- Access to new languages inside the browser, optionally statically typed for major performance boosts.

- A new platform for innovating on the browser by providing a safe mechanism to extend the browser APIs.

We could do this by linking Mono directly into the browser. This would allow developers to write code like this:

<script language="csharp">

from email in documents.ElementsByTag ("email")

email.Style.Font = "bold";

</script>

Or pulling the source from the server:

<script language="csharp" source="ImageGallery.cs"></script>

We could replace `csharp' with any of the existing open sourced compilers (C#, IronPython, IronRuby and others).

Or alternatively, if users did not want to distribute their source and instead ship a compact binary to the end users, they could embed the binary CIL directly:

<script language="cil" source="MailApp.dll"></script>

Pre-emptive answer to the "view-source" crowd: you can use .NET Reflector to look at the source code of a compiled binary. If it is obfuscated, you will enjoy reading that as much as you would enjoy reading any Javascript in the real web.

Embedding the CIL on the browser would create isolated execution environments for each page (AppDomain in ECMA parlance) and sandbox the execution system.

The security model exposed by Silverlight exposes exactly what is needed to go beyond a language-neutral runtime. The security model distinguishes between untrusted code which is subject to verification, strict requirements on what it can do and limits what they can do from "platform" code that is trusted.

Trusted platform code is granted special permissions that untrusted code is not given. The runtime enforces that no untrusted code can call into any security sensitive and protected areas.

This would allow browser vendor to expose new APIs that get full access to the underlying operating system (for example getting direct access to special hardware on the system like microphones and camera) while enforcing that the user code accesses them only through safe gateways.

This is very important to allow developers to try out new trusted APIs: new UI models, rendering systems and APIs built entirely on the same core.

I am absolutely fascinated by the idea and I only regret not having come up with it. We have been too focused on the Moonlight-as-a-plugin to take a step back and think in more general terms: how can we use the ECMA CIL engine for *all* applications without a browser plugin.

Joe like many of us is conflicted between the power of reach that the web has versus the polish and speed that native GUI toolkits deliver.

Althought Silverlight provides a nice UI system inside the plugin, Joe's point is that we need a platform in which we can more quickly innovate new UI ideas, and probably completely different new ideas quickly. And with the ECMA sandbox model we can start testing new ideas without waiting for browser vendors to add the features themselves and we can make the integration between these plugins and the browser stronger than they have ever been.

The plugin model does not provide the necessary tools to drive more innovation in the web. We need a new model, and I am ready to start prototyping Joe's idea.

The only question is what browser to target first Firefox or Chrome.

Gestalt

Gestalt allows developers to use the CLI inside the browser and it shows what can be done with other languages in the browsers. It requires Silverlight to run and the interaction between the code and the browser is limited in the level of integration that can be achieved via the browser-plugin interface.

This solves only the half the problem: multi-languages and does it in a limited way.

Resources

You can learn more about the security model in my previous blog post.

Our open source Silverlight implementation.

Posted on 03 May 2010

« Newer entries | Older entries »

Blog Search

Archive

- 2024

Apr Jun - 2020

Mar Aug Sep - 2018

Jan Feb Apr May Dec - 2016

Jan Feb Jul Sep - 2014

Jan Apr May Jul Aug Sep Oct Nov Dec - 2012

Feb Mar Apr Aug Sep Oct Nov - 2010

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2008

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2006

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2004

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2002

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Dec

- 2022

Apr - 2019

Mar Apr - 2017

Jan Nov Dec - 2015

Jan Jul Aug Sep Oct Dec - 2013

Feb Mar Apr Jun Aug Oct - 2011

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2009

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2007

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2005

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec - 2003

Jan Feb Mar Apr Jun Jul Aug Sep Oct Nov Dec - 2001

Apr May Jun Jul Aug Sep Oct Nov Dec